Series: Security Governance That Actually Works, Introduction

Every organisation I’ve worked with has an ISMS. Most of them don’t have a management system.

They have policies. They have a risk register. They have an audit schedule and a certificate on the wall. What they don’t have is a system that drives security decisions, allocates resources based on actual risk, or gives leadership genuine visibility into their security posture. The ISMS exists for the auditor. Security decisions get made somewhere else, informally, inconsistently, and without accountability.

The certificate-focused model worked for years. ISO 27001 asked organisations to demonstrate that a management system existed, and the industry responded by building systems optimised for that demonstration. Policies got written. Registers got populated. Governance meetings got scheduled. The certificate confirmed that all of this was in place, and everyone moved on.

What’s changed is that compliance is no longer enough. NIS2, DORA, and sector-specific regulations now require evidence that risk information drives decisions, not just evidence that a process exists. The test has shifted from ‘do you have a risk register?’ to ‘has your risk register changed how you allocate resources?’ That’s a different question, and most ISMS programmes can’t answer it.

For practitioners, this regulatory shift is useful leverage. It turns ‘we should do risk management properly’ from an internal argument into an external requirement with consequences. But to use that leverage, you need to understand what a functioning ISMS actually looks like, and where the typical certificate-focused version falls short.

Seven capabilities, one system

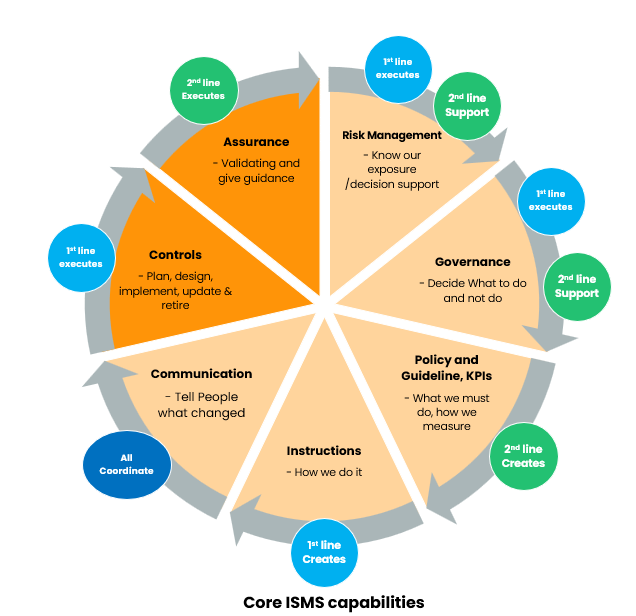

An ISMS that works as a management system needs to address seven capability areas. These map to what a management system actually needs to do: understand what it’s managing (risk management), decide what to do about it (governance), set expectations (policy and guidelines), translate those into practice (instructions), keep people informed (communication), implement the actual measures (controls), and verify that the whole thing works (assurance).

I’ve mapped these as a wheel because none stands alone.

Each capability depends on and reinforces the others. Weakness in one degrades the rest. The portfolio section explores each capability in depth.

Certificate-focused vs risk-based: what changes

The table below contrasts what each capability typically looks like in a certificate-focused ISMS with what it delivers when anchored to mission-critical business objectives and genuine risk-based decision-making.

| Capability | Certificate-focused (typical) | Risk-based (objective-centric) |
|---|---|---|
| Risk management | Risk-list ERM: collect risks, score on a matrix, send a heat map to ERM. Nothing comes back. Risk owners are analysts with no budget authority. Risk appetite defined as ‘low for cyber risk’, meaning nothing operationally | Objective-centric: risk/uncertainty assessment anchored to mission-critical business objectives. The question is ‘how certain are we of achieving objective X?’, not ‘what could go wrong?’ Risk owners are the people who own the objective and can allocate resources. Quantified in financial terms using frameworks like FAIR |
| Governance | Governance bodies receive, note, and file. Charters say ‘monitor’ without specifying decisions. Management does not disclose real risk status, the body does not ask for it. Sourcing decisions happen without security input | Governance bodies make resource allocation decisions based on certainty status linked to objectives. Charters define decision authority. The ‘don’t tell / don’t ask’ pattern is broken by requiring management to disclose uncertainty and requiring the body to act on it |
| Policy and guidelines | Dozens of policies nobody reads, dated for audit cycles. Policy, guideline, and instruction distinctions confused. Metrics measure control implementation percentages and training completion | Policy answers WHY (risk appetite translated to expectations), guidelines answer WHAT (requirements to meet). Metrics measure outcomes tied to business objectives, not compliance artefact completion |
| Instructions | Disconnected from policies. Written by second line, ignored by first line because they don’t fit workflows. Updates don’t propagate | First line writes instructions because they do the work. Second line validates against requirements. Integrated with existing workflows, not parallel to them |
| Communication | Annual awareness training, reactive incident emails. Board gets a quarterly slide deck of metrics nobody acts on. Information doesn’t flow between levels | Three deliberate levels: strategic (board, objective certainty, investment rationale), tactical (management, treatment progress, resource needs), operational (teams, procedures, changes) |
| Controls | Implemented in isolation to satisfy audit requirements. Success measured by implementation percentage. No connection between controls and the risks or objectives they address. Controls that don’t work stay because removing them creates audit findings. Supplier controls are generic and unverified | Controls integrated across capabilities: linked to the risks they treat, the policies they implement, and the objectives they protect. Assessed on both compliance and risk reduction effectiveness. Lifecycle managed, from design to retirement. Enterprise capabilities reused, not duplicated system by system. Supplier obligations are concrete and measurable |
| Assurance | Annual audit. Between audits, nothing systematic. ‘How do we know our controls work?’ ‘We don’t, until the next audit tells us they didn’t’ | Continuous second line oversight, proportionate to risk. Validates that controls work as intended and that certainty information reaching decision-makers is accurate |

The left column is what most organisations have. It passes audits. It does not change how anyone makes decisions.

The right column is what the standard actually intends, and what regulations like NIS2 now require: a management system where risk information, anchored to what the organisation is trying to achieve, drives resource allocation and strategic priorities.
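To make ‘quantified in financial terms’ a little more concrete, here is a minimal sketch of the kind of calculation a FAIR-style analysis rests on: sample how often a loss event happens in a year, sample how much each event costs, and look at the distribution of annual totals. This is my own simplified illustration, not the FAIR standard itself. The function names, the triangular distribution, and every figure below are assumptions chosen for the example.

```python
import random


def simulate_annual_losses(freq_range, loss_low, loss_high, loss_mode,
                           trials=10_000, seed=1):
    """Return simulated annual loss totals (one per trial), sorted ascending.

    Each trial samples how many loss events occur in a year, then sums a
    sampled magnitude for each event. Illustrative only: a real analysis
    would calibrate these ranges with the objective owner.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    totals = []
    for _ in range(trials):
        events = rng.randint(*freq_range)  # inclusive on both ends
        totals.append(sum(rng.triangular(loss_low, loss_high, loss_mode)
                          for _ in range(events)))
    return sorted(totals)


def certainty_within(losses, tolerance):
    """Share of simulated years in which total loss stays within tolerance."""
    return sum(1 for loss in losses if loss <= tolerance) / len(losses)


# Hypothetical figures: 0 to 3 loss events a year, each costing between
# 50k and 2M with a most likely value of 200k (currency is arbitrary).
losses = simulate_annual_losses((0, 3), 50_000, 2_000_000, 200_000)
print(f"Certainty of staying within 1M: {certainty_within(losses, 1_000_000):.0%}")
print(f"95th percentile annual loss: {losses[int(0.95 * len(losses))]:,.0f}")
```

The point of the sketch is the shape of the output, not the numbers. A statement like ‘we are N% certain of staying within tolerance for this objective’ is something a governance body can weigh against the cost of treatment, which a red cell on a heat map is not.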

Why none of this stands alone

These seven capabilities form a system. Pull one out and the rest degrade.

Risk management without governance produces assessments nobody acts on. Governance without risk input makes decisions in the dark. Policy without instructions creates expectations that never translate to practice. Instructions without communication leave teams working from outdated procedures. Controls without assurance give false confidence. Assurance without policy has no standard to measure against.

The certificate-focused ISMS typically builds these capabilities as separate workstreams, each satisfying its own section of the standard. The result is seven silos that individually pass audits but don’t function as a system. The risk-based approach builds them as interconnected capabilities, each feeding the others, because that’s what a management system actually means.

The regulatory pressure

NIS2 changes the equation. Article 21 requires ‘appropriate and proportionate technical, operational and organisational measures to manage the risks posed to the security of network and information systems’. Article 20 places accountability on management bodies and requires them to approve those measures and oversee their implementation. DORA imposes similar expectations in financial services.

These regulations don’t ask whether you have an ISMS. They ask whether your ISMS works. Whether risk management drives decisions. Whether governance bodies exercise real oversight. Whether controls are proportionate to actual risk. Whether assurance validates effectiveness, not just existence.

For organisations still running a certificate-focused ISMS, this is the forcing function. The certificate alone no longer satisfies the regulatory expectation. The management system has to function as one.

What the series covers

The articles in this series examine each capability in depth: the typical state, why it persists, and what risk-based practice looks like in reality.

Risk management starts with why your risk register doesn’t drive decisions and what happens when the only genuine risk-based decision is the cyber insurance renewal. Governance examines how to build an ISMS through delivery rather than documentation. Controls looks at what happens when security requirements meet outsourcing contracts. Assurance explores what second line work actually involves when it moves beyond the audit cycle.

These are practitioner perspectives drawn from multiple organisational contexts across private and public sectors. What works, what doesn’t, and what you can do about it.

This is the introductory article in the ‘Security Governance That Actually Works’ series. The portfolio explores each capability with deeper articles on specific challenges and approaches.

I advise manufacturing companies on security governance and risk management. If this resonates with challenges in your organisation, get in touch.